Everything is Math

Visualizing Math, Linear Algebra, and Matrix Multiplication in LLMs

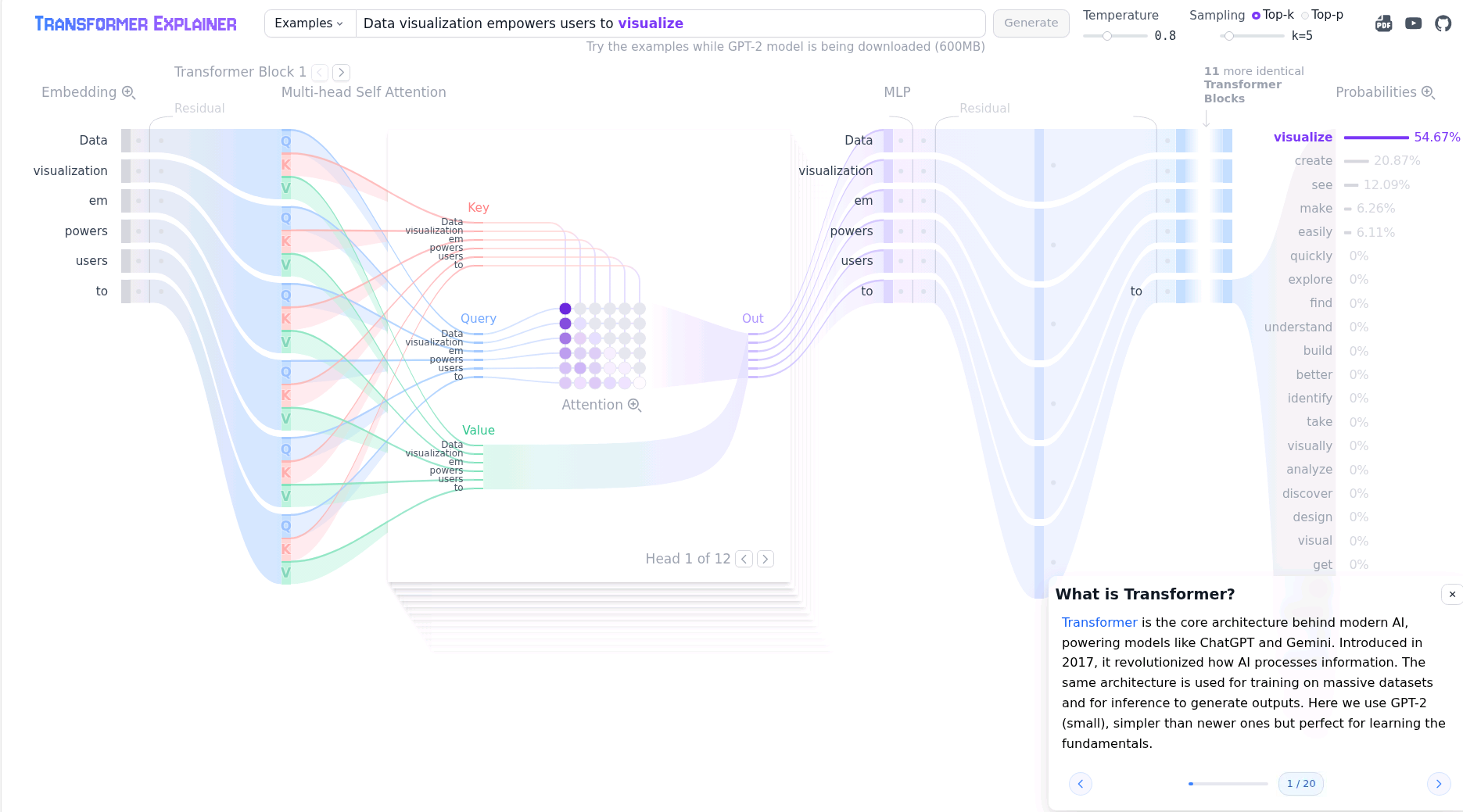

I was recently researching how LLMs work and came across two very fun, interactive explanations, screenshotted below. They further confirmed in my head that everything is math: generating knowledge and words can be turned into multiplications of weighted matrices that produce statistical outputs.
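To make "weighted matrices with statistical outputs" a bit more concrete, here is a minimal toy sketch in plain Python. It is not how a real LLM is built (no attention, no layers, no training). The vocabulary, `EMBEDDINGS`, `W_OUT`, and the helper `next_word_probabilities` are all hypothetical, with made-up numbers. It only shows the core idea: a word becomes a vector, a matrix multiplication turns that vector into one score per word, and softmax turns the scores into probabilities.

```python
import math

# Hypothetical toy vocabulary and weights (made-up values for illustration).
# Real LLMs learn these numbers and use thousands of dimensions, not 3.
VOCAB = ["cat", "sat", "mat"]

# Embedding matrix: one 3-dimensional vector (row) per vocabulary word.
EMBEDDINGS = [
    [1.0, 0.0, 0.5],
    [0.0, 1.0, 0.2],
    [0.3, 0.4, 1.0],
]

# Output projection: maps a 3-dim vector to one raw score (logit) per word.
W_OUT = [
    [0.2, 1.5, 0.1],
    [0.7, 0.1, 1.2],
    [0.0, 0.3, 0.9],
]


def matvec(vector, matrix):
    """Multiply a row vector of length n by an n x m matrix; returns length m."""
    return [
        sum(vector[i] * matrix[i][j] for i in range(len(vector)))
        for j in range(len(matrix[0]))
    ]


def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    top = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - top) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def next_word_probabilities(word):
    """Hypothetical helper: score every vocabulary word as the next word."""
    vector = EMBEDDINGS[VOCAB.index(word)]  # look up the word's vector
    logits = matvec(vector, W_OUT)          # a single matrix multiplication
    return dict(zip(VOCAB, softmax(logits)))


probs = next_word_probabilities("cat")
for w, p in probs.items():
    print(f"{w}: {p:.3f}")
```

With these made-up weights, "sat" gets the highest probability after "cat". Changing the numbers in `W_OUT` changes which word "wins", which is, loosely, what training does at enormous scale.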

Edit 2026-02-09: found more resources:

- https://mlu-explain.github.io/neural-networks/
- https://threads.championswimmer.in/p/why-are-neural-networks-architected
- https://visualrambling.space/neural-network/

This post is licensed under CC BY 4.0 by the author.